In The Role of Databases for Strategic Planning – Some General Remarks, we looked at the growing role databases seem to play in strategic planning and at the difficulties that arise when future uncertainties are to be included in this kind of planning.

It appears quite impractical to get all the contributors to include possible future uncertainties in their input. First of all, they would have to generate massive amounts of data, costing them significant time besides their everyday work, which is often in marketing, market research or sales rather than planning. Second, they will probably have very different ideas about, and approaches to, what might change in the future, making the results difficult to interpret. In addition, database structures tend to resist change, so adjusting them to possible new developments someone foresees can be a lengthy and resource-intensive process.

Uncertainty-based planning can, however, be implemented by building a simulation on top of existing, single-future planning from a database. This makes it possible to vary assumptions ex post and allows users to build their own scenarios and strategies based on the information provided by all the different contributors to the database. The simulation should build on the infrastructure and user interfaces generally used to get information from the database, so the implementation will be quite different depending on the given framework.

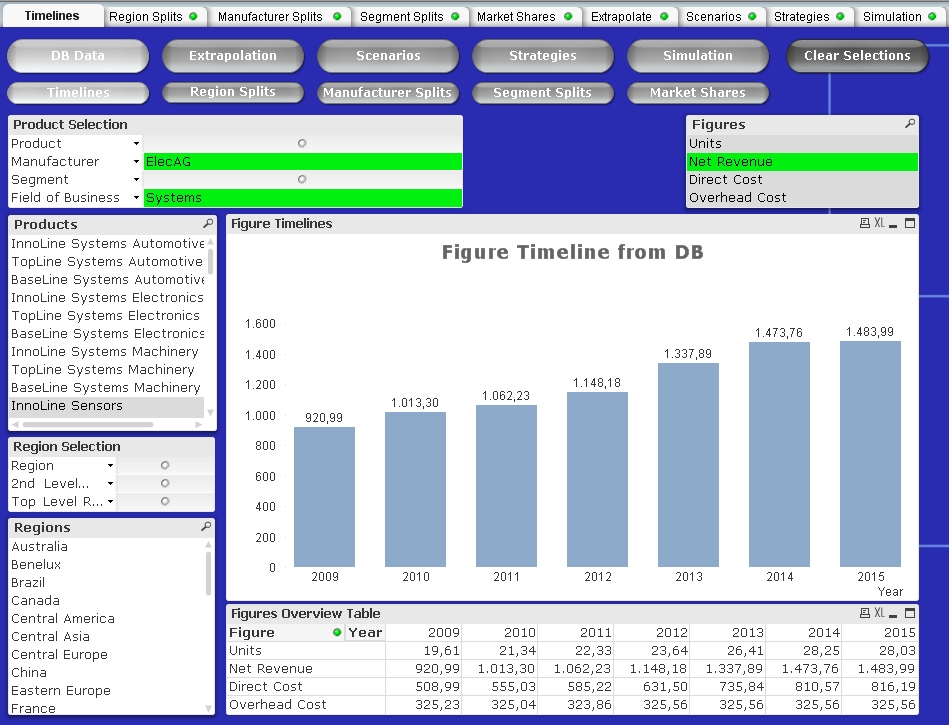

For the case study, let us look at a manufacturer of electronics components, integrated systems of these components and services around these components for different industries. 32 sales offices define the regional structure, which is grouped in 2nd level and top level regions. There are 21 product lines with individual planning, and market research gathers and forecasts basic data for 39 competitor product lines or competitor groups. Product lines are grouped into segments and fields of business. Strategically relevant figures in the database are units sold, net revenue and, available only for own product lines, direct cost and overhead cost. There are a few years of historical data besides forecasts for the coming four years, which is sufficient for the operative planning the database was intended for, but rather short for strategic decisions like the building of new plants or the development or acquisition of new products. The strategically relevant total data volume therefore is small compared to what controlling tends to generate, but rather typical for strategy databases.

In this case, we will look at a relational (e.g. Oracle – all brands mentioned are trademarks of their respective owners) database accessed through the Qlikview business intelligence tool. As Qlikview defines the only access to the data generally used by the planner, the actual database behind it, and to a certain extent even its data model, will mostly be interchangeable. The basic timeline view of our case study database looks as follows:

To effectively simulate the effects of the company’s decisions for different future developments, we have to extrapolate the data to a strategic timescale and calculate the effects of different external scenarios and own strategies. Qlikview, however, is a data mining tool, not a simulation tool. Recent versions have extended its interactive capabilities, introducing and expanding the flexibility of input fields and variables, but generally, Qlikview has not been developed to do complex interactive calculations in it. Future versions may move even more in that direction, but the additional complexity needed to include a full-fledged simulation tool would be immense. To do such calculations, one has to rely on macros to create the functionality, but Qlikview macros are notoriously slow, their Visual Basic Script functionality is limited compared to actual Visual Basic, and the macro editing and debugging infrastructure is rather… let’s say, pedestrian. Some experts actually consider it bad practice to use macros in Qlikview at all.

The only way around this limitation is to move the actual calculations out of Qlikview. We use Qlikview to select the data relevant for the simulation (which it does very efficiently), export this data plus the simulation parameters as straight tables to MS Excel or Access, run the simulation there and reimport the results.
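The export, calculate and reimport round trip can be illustrated with a minimal sketch. It is written in Python as a stand-in for the Excel or Access macro used in the case study, and it uses CSV text in place of the exported straight tables. The column names, the parameter names `annual_growth` and `extra_years`, and the simple growth rule are hypothetical and only serve to show the data flow.

```python
import csv
import io


def read_table(text):
    """Parse an exported straight table (CSV text) into a list of dict rows."""
    return list(csv.DictReader(io.StringIO(text)))


def write_table(rows, fieldnames):
    """Serialize result rows to CSV text, ready to be reimported."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=fieldnames, lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buf.getvalue()


def run_simulation(data_rows, param_rows):
    """Extend each product's last planned year by a constant growth rate.

    The growth rule is a placeholder, not the case study's market model.
    """
    params = {p["name"]: float(p["value"]) for p in param_rows}
    growth = params["annual_growth"]      # hypothetical parameter
    horizon = int(params["extra_years"])  # hypothetical parameter
    results = []
    for row in data_rows:
        year, units = int(row["year"]), float(row["units"])
        for step in range(1, horizon + 1):
            results.append({
                "product": row["product"],
                "year": year + step,
                "units": round(units * (1 + growth) ** step, 2),
            })
    return results


# Stand-ins for the two tables exported from the BI tool
exported_data = "product,year,units\nLine A,2018,1000\n"
exported_params = "name,value\nannual_growth,0.1\nextra_years,2\n"

results = run_simulation(read_table(exported_data), read_table(exported_params))
reimport_text = write_table(results, ["product", "year", "units"])
```

The point of the design is that the BI tool only ever sees tables going out and tables coming back, so the calculation engine behind it can be exchanged without touching the user interface.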

Now, why would one want to export and reimport data to do a calculation as a macro in Excel, in essentially the same language? VBScript in Qlikview is interpreted: one line of the macro is translated and executed, then the next line is translated and executed, which consumes massive resources for translation, especially if the macro involves nested loops, as most simulations do extensively. VB in MS Office is compiled, which means that at the time of execution, the whole macro has already been translated. In our case study, that makes it orders of magnitude faster. In fact, the fairly complex simulation calculations themselves consume the least amount of time. The slowest part of the tool functionality is the export from Qlikview, which is even slower than the reimport of the larger, extrapolated data tables. In total, the extrapolation of the whole dataset (which only has to be done once after a database update) takes well under a minute on a normal business notebook, which should be acceptable considering that the database update itself from an external server may also take a moment. Changing extrapolations for single products or simulating a new set of strategies and scenarios is a matter of seconds, keeping calculation time well within a reasonable frame for interactive work.

In most cases, a strategic planner will want to work with the results of his interactive simulations locally. It is, however, also possible to write the simulation results back into equivalent structures in the Oracle database, another functionality Qlikview does not provide. In that case (as well as for very large amounts of data), the external MS Office tool invoked for the calculation is Access instead of Excel. Through the ODBC interface, Access (controlled in Visual Basic, started by Qlikview) can write data to the Oracle database, making simulation results accessible to the selected users.

The market model used for the extrapolation and simulation in the case study is rather generic. Units sold are modeled based on a product line's peak sales potential and a standardized product life cycle characteristic of the market. A price level figure connects the units to revenue; direct cost is extrapolated as a percentage of revenue and overhead cost as absolute numbers. The assumptions for the extrapolation of the different parameters can be set interactively. Market shares and contribution margins are calculated on the side, leading to the following view for the extrapolation:

Of course, depending on the market, different and much more complex market models may be necessary, but the main difference will be in the external calculations and will not affect the performance visible to the user. Distribution-driven markets can include factors like sales force or brand recognition, whereas innovation-driven markets can be segmented according to very specific product features. Generally, the market model should be coherent with the one marketing uses in shorter-term planning, but it can be simplified for the extrapolation to strategic timescales and for interactive simulation.
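The generic market model described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the bell-shaped life cycle curve, its parameters `peak_age` and `width`, and the definition of contribution margin as revenue minus direct cost are not taken from the case study, and all names are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class ProductLine:
    peak_units: float         # peak sales potential, units per year
    launch_year: int          # year in which the life cycle starts
    price: float              # price level: net revenue per unit
    direct_cost_share: float  # direct cost as a fraction of revenue
    overhead: float           # overhead cost per year, absolute


def life_cycle(age, peak_age=5.0, width=3.0):
    """Assumed standardized life cycle: a bell curve equal to 1.0 at peak_age."""
    return math.exp(-((age - peak_age) / width) ** 2)


def extrapolate(line, years):
    """Return one row of strategic figures per year for a product line."""
    rows = []
    for year in years:
        units = line.peak_units * life_cycle(year - line.launch_year)
        revenue = units * line.price
        direct_cost = revenue * line.direct_cost_share
        rows.append({
            "year": year,
            "units": units,
            "revenue": revenue,
            "direct_cost": direct_cost,
            "overhead": line.overhead,
            # Assumption: contribution margin = revenue - direct cost
            "contribution_margin": revenue - direct_cost,
        })
    return rows


line = ProductLine(peak_units=1000, launch_year=2015, price=10.0,
                   direct_cost_share=0.4, overhead=1500.0)
rows = extrapolate(line, range(2018, 2023))
```

Because every figure is derived from a handful of drivers (sales potential, life cycle, price level, cost share, overhead), changing one assumption interactively only requires recomputing these rows for the affected product lines.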

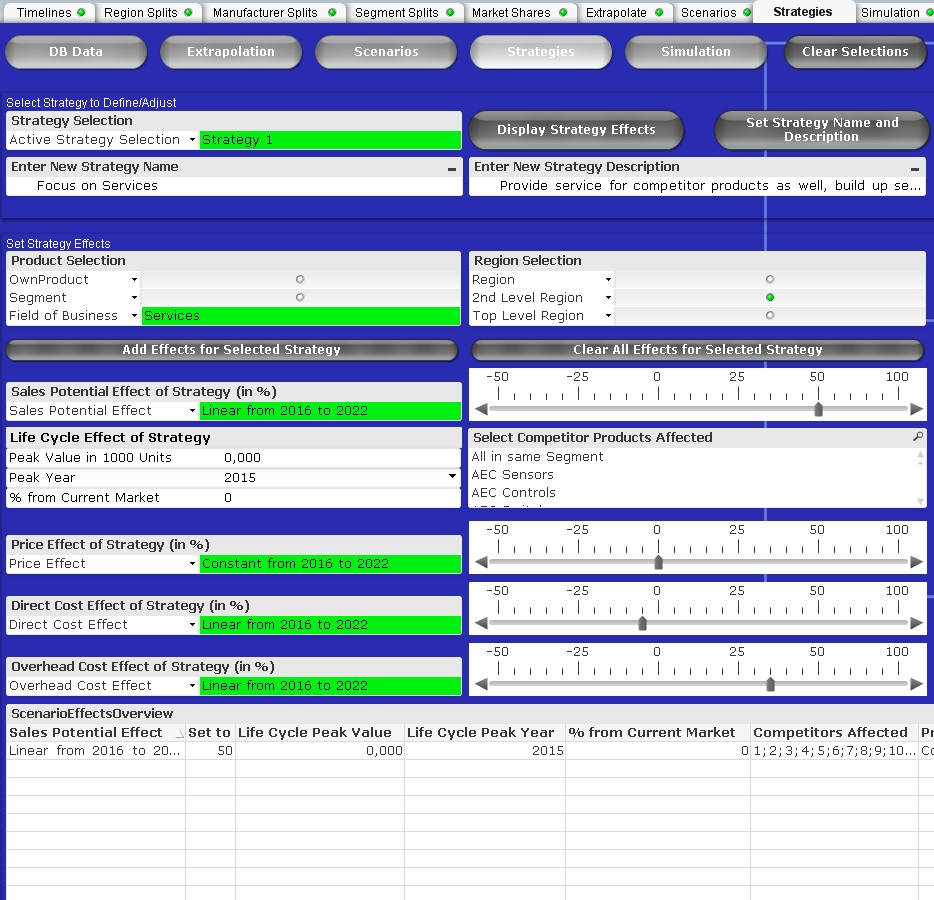

If a strategic simulation is developed for a specific, single decision, scenarios with very specific effects including interferences between different scenarios and strategies can be developed in the project team and coded into the tool. In the present case study, a planning tool for long-term use in corporate strategy, both scenario and strategy effects are defined on the level of the market model drivers and can be set interactively. Ten non-exclusive scenarios for future developments can be defined, and for each scenario, ten sets of effects can be defined for selected groups of regions and products. Scenarios affect both own and competitor products and can have effects on sales potential, price, direct and overhead cost.

Strategy definitions are very similar to scenario definitions, but strategies can only affect sales potential and price of own product lines. To account for the development or acquisition of new products, they can also add a life cycle effect, which can either expand the market or take market shares from selected or all competitors in the respective segment.

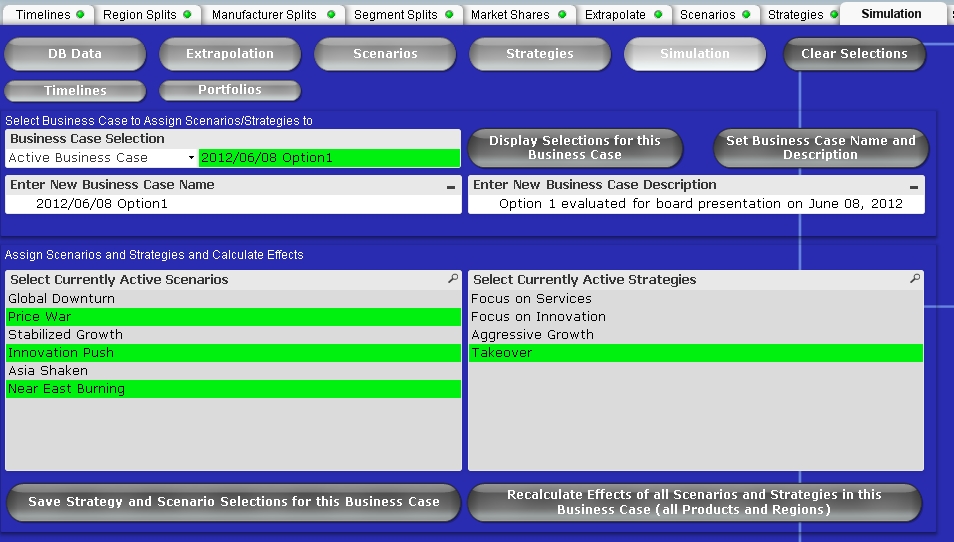

As opposed to classical scenario theory, the scenarios in this case study are non-exclusive, so the impacts of different scenarios can come together. Strategies can also be combined, so a strategy of intrinsic growth could be followed individually or backed up with acquisitions. A predefined combination of scenarios and strategies can be stored under a business case name for future reference.
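One way to picture non-exclusive scenarios and combinable strategies is as sets of effects on the market model drivers, each restricted to selected regions and products. The sketch below assumes that effects are multiplicative factors and that overlapping effects combine by multiplication; the case study does not specify this, and all scenario, strategy and driver names are hypothetical.

```python
from dataclasses import dataclass

DRIVERS = ("sales_potential", "price", "direct_cost", "overhead")
STRATEGY_DRIVERS = ("sales_potential", "price")  # strategies may only touch these


@dataclass(frozen=True)
class Effect:
    driver: str
    factor: float                     # assumed multiplicative effect on the driver
    regions: frozenset = frozenset()  # empty set means "all regions"
    products: frozenset = frozenset() # empty set means "all products"

    def applies_to(self, region, product):
        return ((not self.regions or region in self.regions)
                and (not self.products or product in self.products))


SCENARIOS = {
    "raw material shortage": [Effect("direct_cost", 1.10)],
    "recession in Europe": [Effect("sales_potential", 0.80,
                                   regions=frozenset({"Europe"}))],
}
STRATEGIES = {
    "premium pricing": [Effect("price", 1.05, products=frozenset({"Line A"}))],
}
# A business case stores a named combination of scenarios and strategies
BUSINESS_CASES = {
    "cautious": {"scenarios": ["raw material shortage", "recession in Europe"],
                 "strategies": ["premium pricing"]},
}


def active_effects(case):
    """Collect all effects of a business case, validating strategy drivers."""
    effects = []
    for name in case["scenarios"]:
        effects.extend(SCENARIOS[name])
    for name in case["strategies"]:
        for effect in STRATEGIES[name]:
            if effect.driver not in STRATEGY_DRIVERS:
                raise ValueError(f"strategy {name!r} may not change {effect.driver}")
            effects.append(effect)
    return effects


def combined_factors(region, product, effects):
    """Multiply all applicable effects into one factor per driver."""
    factors = {driver: 1.0 for driver in DRIVERS}
    for effect in effects:
        if effect.applies_to(region, product):
            factors[effect.driver] *= effect.factor
    return factors


effects = active_effects(BUSINESS_CASES["cautious"])
europe_a = combined_factors("Europe", "Line A", effects)
asia_b = combined_factors("Asia", "Line B", effects)
```

Here `europe_a` is hit by both scenarios and the strategy, while `asia_b` only sees the global cost scenario, which is the kind of overlap that exclusive scenarios would not allow.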

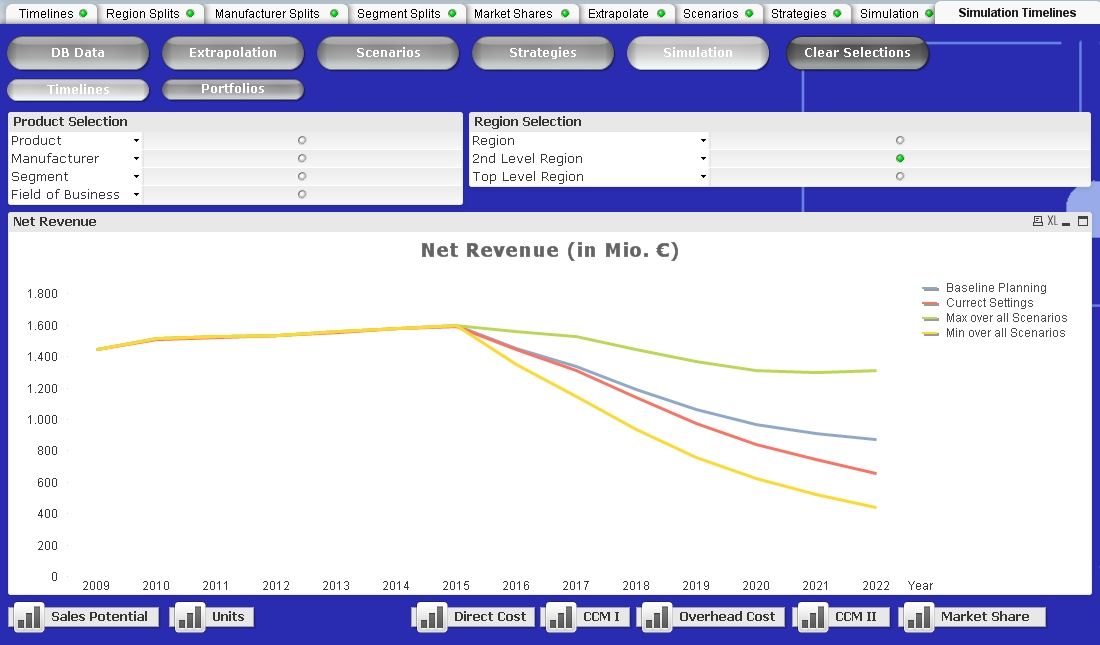

Various visualizations of the evaluated data are automatically generated for interactive product and region selections using the Qlikview tools. In this case study, the linear timeline graph displays the baseline planning in comparison to the timeline after the current scenario and strategy settings for a selected figure. For certain figures the maximum and minimum values over all scenarios for the selected strategy are also displayed.

Risk portfolios can display expected values vs. risk (the variation across all scenarios) for a selected strategy. Qlikview makes it very convenient to create these portfolios for selected products and regions, aggregated over adjustable parts of the timeline. As desired, other types of portfolio views (e.g. market share vs. market size) can also be created.
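The figures behind such a timeline band or risk portfolio can be sketched as a simple aggregation over scenario results. The example assumes equally weighted scenarios and uses the population standard deviation as the risk measure; both choices are illustrative assumptions rather than the case study's definition, and the data are invented.

```python
import statistics


def risk_summary(values_by_scenario):
    """Summarize one figure (e.g. net revenue) over all scenarios of a strategy."""
    values = list(values_by_scenario.values())
    return {
        "expected": statistics.mean(values),  # assumes equal scenario weights
        "risk": statistics.pstdev(values),    # assumed risk measure: std. deviation
        "min": min(values),
        "max": max(values),
    }


def portfolio(figures_by_product):
    """Return (product, expected value, risk) points for a risk portfolio chart."""
    points = []
    for product, values_by_scenario in figures_by_product.items():
        summary = risk_summary(values_by_scenario)
        points.append((product, summary["expected"], summary["risk"]))
    return points


# Invented example: revenue of two product lines under three scenarios
figures = {
    "Line A": {"baseline": 100.0, "recession": 80.0, "boom": 120.0},
    "Line B": {"baseline": 50.0, "recession": 50.0, "boom": 50.0},
}
summary_a = risk_summary(figures["Line A"])
points = portfolio(figures)
```

The `min` and `max` entries correspond to the band shown around the timeline graph, while the `expected` and `risk` pair gives one point per product line in the portfolio view.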

In all these cases, it must be kept in mind that the purpose of the strategic simulation is not to provide exact numbers on what sales will be in the year 2022 given a certain scenario, but rather to make it clear to what extent that value can vary over all included scenarios. No simulation can eliminate uncertainty, but a good simulation will make the implications of uncertainty more transparent.

Qlikview does not support these simulations directly, but using the workaround of external calculation, the user-friendly interface Qlikview provides allows convenient selection of values and assumptions as well as quick and appealing visualization of results.

In an upcoming case study, we will look at the same business background implemented in a very different database and interface environment.

Dr. Holm Gero Hümmler

Uncertainty Managers Consulting GmbH
